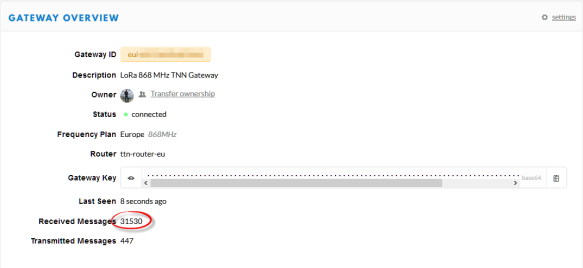

My LoRaWAN gateway (see “Contributing an IoT LoRaWAN Raspberry Pi RAK831 Gateway to The Things Network”) has now been running and working great for more than a month, and it has already transmitted more than 30k messages.

All this creates a lot of log entries on the micro SD card of the Raspberry Pi. To avoid writing log data to the card so often, I have installed Log2Ram.

By default, the Raspberry Pi uses a micro SD card as its storage device. As a FLASH device, the SD card is subject to ‘wear-out’: each FLASH block can only be erased and written a limited number of times, and after that it fails. The card’s ‘wear leveling’ then allocates and uses a spare flash block instead, but after some time there are no spare blocks left and the system fails.
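As a rough, first-order way to see what drives the lifetime (a textbook endurance estimate, not a model of any particular card), the expected life in days can be written as:

$$
T_{\text{life}} \approx \frac{C \cdot N_{\text{P/E}}}{W_{\text{day}} \cdot \mathrm{WAF}}
$$

where $C$ is the card capacity, $N_{\text{P/E}}$ the number of program/erase cycles each block tolerates, $W_{\text{day}}$ the amount of data the system writes per day, and $\mathrm{WAF}$ the write amplification factor (bytes physically programmed by the card per byte written by the host). Frequent small log writes hurt twice: they add to $W_{\text{day}}$, and because every flush programs at least a whole flash page, they also drive $\mathrm{WAF}$ up.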

A running gateway creates lots of log messages stored on the card, and I have read reports that, depending on the card and its size, it might fail after 6-18 months.

As most of the data written by a gateway are the Linux log files, Log2Ram offers a way to reduce the number of writes to the SD card. It mounts /var/log as a RAM disk (tmpfs), so any writes to /var/log go to RAM. The RAM disk is written to FLASH only every hour by a cron job, and at shutdown. This greatly reduces the stress on the SD card.
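The write-back idea can be sketched in a few lines of shell. This is a minimal illustration under stated assumptions, not Log2Ram’s actual script: the function name `sync_ram_to_disk` and its two directory parameters are hypothetical, standing for the tmpfs on /var/log and a persistent copy on the SD card.

```shell
#!/usr/bin/env bash
# Hypothetical sketch of the Log2Ram idea (not its real implementation):
# logs are written to a RAM-backed directory all the time, and only this
# periodic (or shutdown-time) copy touches the SD card.
sync_ram_to_disk() {
  local ram_dir=$1    # e.g. the tmpfs mounted on /var/log
  local disk_dir=$2   # e.g. a persistent directory on the SD card
  mkdir -p "$disk_dir" || return 1
  # One batched copy; -a keeps permissions, ownership and timestamps.
  cp -a "$ram_dir/." "$disk_dir/"
}

# An hourly cron job would then call something like (path is hypothetical):
#   sync_ram_to_disk /var/log /path/to/persistent/log
```

The point of the design is that many small appends during the hour cost nothing on the card; only the batched copy does.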

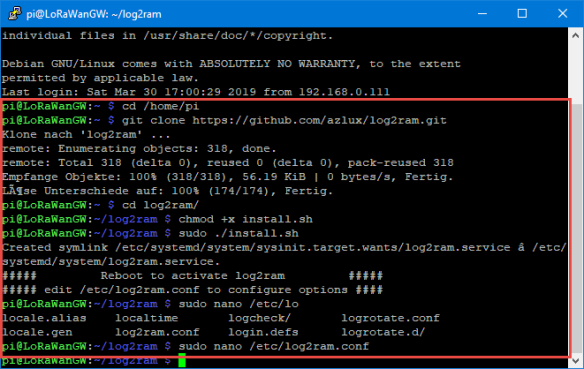

Installation and setup are easy:

Go to the pi home directory:

cd /home/pi

Clone the log2ram git repository there:

git clone https://github.com/azlux/log2ram.git

Change directory to the downloaded repository folder:

cd log2ram

Make the installation script executable:

chmod +x install.sh

Install it:

sudo ./install.sh

Open the configuration file and change the log size value (SIZE) to 128M:

sudo nano /etc/log2ram.conf

Save the file (CTRL-X, Y, ENTER), then reboot:

sudo reboot
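If you prefer not to edit the file in nano, the size change can also be scripted. A minimal sketch, assuming the setting is a line of the form `SIZE=...` in /etc/log2ram.conf (check your copy of the file first); `set_log2ram_size` is a hypothetical helper name:

```shell
#!/usr/bin/env bash
# Hypothetical helper: rewrite the SIZE= line of a log2ram configuration file.
# Assumes the file already contains a line starting with "SIZE=".
set_log2ram_size() {
  local size=$1                       # e.g. 128M
  local conf=${2:-/etc/log2ram.conf}  # config file to edit in place
  if ! grep -q '^SIZE=' "$conf"; then
    echo "no SIZE= line in $conf" >&2
    return 1
  fi
  # Replace only the value of the SIZE= line; all other lines stay untouched.
  sed -i "s/^SIZE=.*/SIZE=${size}/" "$conf"
}

# Usage (as root), followed by a reboot:
#   set_log2ram_size 128M
```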

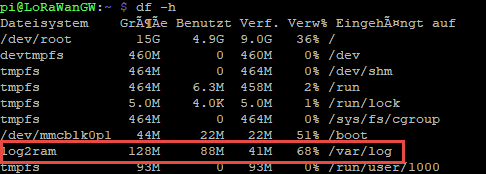

To check whether it is working, use

df -h

This should list the new log2ram disk mounted on /var/log.

In addition to this, check with

mount

which should show our mount point:

log2ram on /var/log type tmpfs (rw,nosuid,nodev,noexec,relatime,size=131072k,mode=755)
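The check can also be automated, for example in a monitoring script. The sketch below (the function name `log2ram_size_kib` is hypothetical) reads `mount` output and prints the size of the tmpfs mounted on /var/log in KiB; 131072 KiB is 128 × 1024, which matches a 128M setting.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `mount` output on stdin and print the size (KiB)
# of the tmpfs mounted on /var/log. Returns 1 if there is no such mount
# (or if it has no size= option).
log2ram_size_kib() {
  awk '
    # Fields: <device> on <mount point> type <fstype> (<options>)
    $3 == "/var/log" && $5 == "tmpfs" {
      if (match($0, /size=[0-9]+k/)) {
        print substr($0, RSTART + 5, RLENGTH - 6)  # digits between "size=" and "k"
        found = 1
      }
    }
    END { exit (found ? 0 : 1) }'
}

# Usage:
#   mount | log2ram_size_kib || echo "no sized tmpfs on /var/log"
```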

That’s it! With this, writing to FLASH should now be greatly reduced. Time for muffins!

Happy Logging 🙂

Hi Erich,

Are you using any hardware / software on your Pi to avoid SD card corruption if the power gets interrupted?

Thanks, Ian


Power outages are very rare in Switzerland. I power the Pi with PoE, which is attached to a UPS. But I’m thinking about adding a small Pi UPS so that it can shut down the Pi with a pin: it is possible to assign a pin that powers down Linux. I’m already using that with a Kinetis K22 (tinyK22) board.


Pingback: Give Your Raspberry Pi SD Card a Break: Log to RAM | Hackaday

Hi Erich,

You mention a cron job that can write the logs from RAM to SD card every hour, but I don’t see any setup for that.

Is this set up automatically, or does it have to be defined separately?


See install.sh: it installs the cron job file (and a logrotate configuration):

# cron

install -m 755 log2ram.hourly /etc/cron.hourly/log2ram

install -m 644 log2ram.logrotate /etc/logrotate.d/log2ram


Just a note that install.sh now seems to set it up daily, not hourly, by default.


David,

just wondering how you observed this?


That was recently changed (see https://github.com/azlux/log2ram/commit/bd38f34a257c596d5d9898b2baefd4b2992392f4). Older installations are not affected unless you upgrade.
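Because install.sh drops a file into one of the /etc/cron.* directories (which cron runs via run-parts) instead of adding an entry to a user crontab, the job does not show up in `crontab -l`. A small sketch to see which schedule an installation ended up with; the helper name is hypothetical, and it assumes the file is named `log2ram` as in the install.sh lines quoted earlier in this thread:

```shell
#!/usr/bin/env bash
# Hypothetical helper: report which /etc/cron.<period> directory holds the
# log2ram job (hourly, daily or weekly). Returns 1 if none is found.
log2ram_cron_schedule() {
  local etc_dir=${1:-/etc} period
  for period in hourly daily weekly; do
    if [ -e "$etc_dir/cron.$period/log2ram" ]; then
      echo "$period"
      return 0
    fi
  done
  return 1
}

# Usage:
#   log2ram_cron_schedule || echo "no log2ram cron job found"
```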


If I have logging turned off, can I comment out the cron job files without any problems? I have an RPi 3B running on a 2GB card and just installed this program. Thanks.


I would not turn off the cron job files unless you know what they are doing and you really don’t need them.


Are you aware of any actual SD card failures in a Raspberry Pi? Statistics? I’ve had one running continuously since December 2012 with extensive logging to an 8GB card with no problems. I was persuaded to not use RasPi in a commercial product because the SD would be unreliable, but I’m not sure that’s the case with quality (SanDisk or Samsung) cards.


I had one case of a home automation server where after 6 months the SD card was dead. I did not investigate much, but that server was writing logs all the time.

There is a report here: https://durdle.com/2017/01/04/how-not-to-kill-your-pis-sd-card/

So I have seen failures after 3-18 months, but that is not scientific data. Of course it depends on the card quality/brand and on the amount/frequency of logging.


I’m running openHAB on my Raspberry Pi, and SD card failure is a big talking point in the user community. Statistics would be difficult to get, since most people just plug in a new SD without ever reporting the failure, but there are enough people claiming it that I think the risk is real. As you say, using higher-quality cards probably mitigates that risk.


Howdy Erich, I just tried this on my Pi Zero W running FlightRadar24, and it didn’t seem to create the mount. The git clone worked and the installation seemed to succeed; I rebooted, edited the configuration file, and after rebooting again there is no entry for log2ram under `df -h`.


I suggest you run the steps in install.sh line by line to see whether any of them fails.


Okay, I went through each line as root (`sudo -i`) but saw no error messages. Are there any logs I can look at to see what might be going wrong?


Is the service running?

See as well https://github.com/azlux/log2ram#it-is-working, the log is written to /var/log/log2ram.log.

Other than that, you might file a ticket on https://github.com/azlux/log2ram/issues


Great job, this is a really good idea and perfect implementation. Thank you! I’ll have this on all my Raspberries for sure.


Have a look at https://github.com/StuartIanNaylor/zram-config as it has a number of advantages over Log2Ram.

Some problems with Log2Ram:

Firstly, the hourly write-out of logs is nearly pointless, as it only matters for a full system crash: the logs are written anyway when the service stops. And even then, the log information from an (unlikely) full system crash is still likely to be lost, unless you were fortunate enough that the crash happened right after the hourly write had taken place.

Also, any log that has changed gets copied in full every hour, which sort of negates its purpose, and strangely for no real reason.

It also keeps all the old (rotated) logs in memory, which isn’t required, and copies all of them again on start, for no real reason.

zram-config, linked above, does zswap, zram and a zlog, but in a much better manner.

It puts zram in the upper layer of an OverlayFS mount, with the original directory bind-mounted as the lower layer, so thanks to the copy-up of COW only changes end up in zram.

The same principle is used for zdir, so large directories can be kept free of writes with an extremely small memory footprint.

It also uses logrotate’s olddir directive to ship old logs to persistent storage, which again saves far more RAM than Log2Ram.

You can create any number of zswaps and zdirs, plus a zlog, via /etc/ztab. This greatly reduces SD card writes while using much less memory than Log2Ram, and /var/log is much less likely to hit its size limit and fail, as often happens with Log2Ram.
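To make the OverlayFS idea more concrete, here is a dry-run sketch that only prints the commands for such a setup. It is not zram-config’s code: the helper name, the staging directory and the choice of ext4 on the zram device are assumptions. `zramctl --find --size` allocates a free zram device and prints its name; the upper and work directories must live on the same filesystem, which is why both sit on the zram mount. Note that copy-up works per file: a file modified in the lower layer is copied to zram as a whole.

```shell
#!/usr/bin/env bash
# Dry run: print (do not execute) the commands that would put the writable
# upper layer of an OverlayFS mount on zram, keeping the original directory
# as the read-only lower layer. Paths and sizes are hypothetical.
overlay_on_zram_cmds() {
  local target=$1   # directory to protect, e.g. /var/log
  local size=$2     # zram size, e.g. 50M
  local stage=$3    # hypothetical staging directory, e.g. /opt/zram/log
  cat <<EOF
dev=\$(zramctl --find --size $size)
mkfs.ext4 -q "\$dev"
mkdir -p $stage/lower $stage/zram
mount --bind $target $stage/lower
mount "\$dev" $stage/zram
mkdir -p $stage/zram/upper $stage/zram/work
mount -t overlay overlay -o lowerdir=$stage/lower,upperdir=$stage/zram/upper,workdir=$stage/zram/work $target
EOF
}

# Usage: review the printed commands before running anything as root.
#   overlay_on_zram_cmds /var/log 50M /opt/zram/log
```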


There were so many better ways to express this comment than “the code you used is crap, mine is much better.”


I think that, as the number of sectors written to the SD card is NOT reduced by writing them at another time (e.g. delayed, grouped or whatever), the wear does not get any better.

I think you have to throw away data to reduce the wear.

So I think you have to disable writes to the SD card completely, or maybe send the logs to a remote syslog server, via email or similar (but without saving a temporary file).

Delaying and grouping writes is used to increase performance on disks where access time is significant (= hard disks). It is also useful to reduce power dissipation in some cases.

Also, consider something like laptop-mode-tools…


Agreed: the less data, the better. For the writes, it depends on how they are cached (or synced with the disk). So even if there is only a small amount of data, flushing it all the time, as happens with log files, greatly increases the wear of the card.
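A simplified model shows why the flush pattern matters even when the amount of log data stays the same. It assumes (hypothetically) that every flush programs at least one whole flash page, and it ignores erase blocks, controller caching and file-system metadata, so the numbers are illustrative only; `pages_programmed` is a hypothetical helper and the sizes below are made-up example values.

```shell
#!/usr/bin/env bash
# Simplified wear model (illustrative only): count flash pages programmed when
# n writes of a given size are grouped into a given number of flushes.
# Assumes each flush rounds its data up to whole pages.
pages_programmed() {
  local n_writes=$1 bytes_each=$2 page_bytes=$3 flushes=$4
  local total=$(( n_writes * bytes_each ))
  local per_flush=$(( (total + flushes - 1) / flushes ))                  # ceil(total / flushes)
  local pages_per_flush=$(( (per_flush + page_bytes - 1) / page_bytes ))  # ceil(per_flush / page)
  echo $(( pages_per_flush * flushes ))
}

# 3600 log lines of 100 bytes in one hour, 16 KiB pages (hypothetical values):
pages_programmed 3600 100 16384 3600   # one flush per line -> 3600
pages_programmed 3600 100 16384 1      # one hourly flush   -> 22
```

Under these assumptions the same 360 kB of log data costs about 160 times fewer page programs when written in one batch.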


Pingback: Tutorial: MCUXpresso SDK with Linux, Part 1: Installation and Build | MCU on Eclipse

You are right: writing lots of small data chunks to your SD card limits its lifetime because of the SD card’s block size.

A better way is simply to increase the file system’s commit interval in /etc/fstab, for example:

/dev/sda5 / ext4 defaults,noatime,commit=60 0 1

The default is 5 seconds.

If you set it to a value of 60-300 seconds, a normal SD card should last practically forever in your system.


ah, did not know about that one. Good suggestion!


Do I add this line to /etc/fstab, or do I modify a line already in the file?

I already have a similar line in there:

/dev/mmcblk0p7 / ext4 defaults,noatime 0 1


You have to append

commit=60

to your options, separated by a comma. See as well https://unix.stackexchange.com/questions/155784/advantages-disadvantages-of-increasing-commit-in-fstab
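The edit can be scripted so that it never touches other entries and does not add the option twice. A minimal sketch (the helper name `add_commit_option` is hypothetical) that reads fstab on stdin and writes the result to stdout, so you can review it before replacing the real file:

```shell
#!/usr/bin/env bash
# Hypothetical helper: read fstab on stdin and append commit=<seconds> to the
# options (4th field) of the root ("/") entry, unless a commit= is already set.
# Note: awk re-joins the edited line with single spaces.
add_commit_option() {
  local secs=${1:-60}
  awk -v secs="$secs" '
    $1 !~ /^#/ && $2 == "/" && ("," $4) !~ /,commit=/ { $4 = $4 ",commit=" secs }
    { print }'
}

# Usage: write to a new file, review it, then copy it over /etc/fstab as root.
#   add_commit_option 60 < /etc/fstab > /tmp/fstab.new
#   diff /etc/fstab /tmp/fstab.new
```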


Pingback: Building a Raspberry Pi UPS and Serial Login Console with tinyK22 (NXP K22FN512) | MCU on Eclipse

Sadly, that disk would not appear.

I retried all the lines manually (with sudo), with no effect.

Checking with crontab -e (and sudo crontab -e), it turns out nothing was written there, but that should not matter for the install.

I ended up using the fstab commit option instead.


FYI, the install steps have changed today (https://github.com/azlux/log2ram#with-apt-recommended).

I’ve set up a personal APT repository, which makes updating easier.


thanks for that update!


Is it possible to have /var/log never be written to the disk (and just discarded on shutdown)?


yes, see the documentation of log2ram (refresh time).


After running the Log2Ram disk for a couple of weeks, I noticed that df -h always reports 100% usage.

Is this normal?

Shouldn’t the cron job flush the RAM disk every hour?

crontab -l will not show me anything regarding Log2ram.

What do I miss here?


No, that’s not normal. I have been running Log2Ram for months and do not see this: df -h shows only around 60% usage for me.
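To keep an eye on this, the usage figure can be extracted from `df` output. A small sketch with a hypothetical helper name; it expects POSIX output (`df -P`), where the fifth column is the usage percentage and the last column the mount point, and the 90% threshold in the usage line is an arbitrary example:

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `df` output on stdin and print the usage percentage
# (without the % sign) of the file system mounted on /var/log.
# Returns 1 if /var/log does not appear in the input.
varlog_usage_percent() {
  awk '$NF == "/var/log" { sub(/%$/, "", $5); print $5; found = 1 }
       END { exit (found ? 0 : 1) }'
}

# Usage: warn when the RAM disk is nearly full (threshold is an assumption).
#   pct=$(df -P /var/log | varlog_usage_percent) && [ "$pct" -ge 90 ] &&
#     echo "warning: /var/log is ${pct}% full"
```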


Hello Erich. Thank you for this informative and useful article. I installed it today and noted that you may have missed a step – or at least missed one that linux experts might not be aware of. The file log2ram.conf is in the directory /home/pi/log2ram after running the installation script. Therefore, there should be an extra step inserted after installation of log2ram

sudo cp /home/pi/log2ram/log2ram.conf /etc/

then

Change the log size value to 128M:

etc.


Hi Bill,

I think something went wrong with your installation, because the installation script already copies that configuration file to /etc/log2ram.conf (see https://github.com/azlux/log2ram/blob/master/install.sh, line 12).

PS: my LoRaWAN Gateway Raspberry Pi has been running on that same SD card with Log2Ram since March 2019 without any issues. 🙂


I use an RPi 4 as a kiosk. Its SD card failed within weeks. I then disabled SD card writes using a very simple method, and it has been working fine ever since. I also added a 15-second watchdog timer.

To disable writes to SD Card:

In the GUI, click Pi > Preferences > Raspberry Pi Configuration.

Go to the “Performance” tab and you’ll see an option “Overlay File System.” Click the “Configure…” button.

Select “Overlay: Enabled” and “Boot Partition: Read-only.”

Click “OK” and wait while the system works. It may take a minute or more to complete. This is normal.

Reboot when prompted

Source: https://learn.adafruit.com/read-only-raspberry-pi

To enable the WDT,

1) Enable the hardware watchdog on your Pi and reboot

sudo su

echo 'dtparam=watchdog=on' >> /boot/config.txt

reboot

After this reboot the hardware device will be visible to the system. The next steps install the software side of this to communicate with the watchdog.

2) Install the watchdog system service

sudo apt-get update

sudo apt-get install watchdog

3) Configure the watchdog service

sudo su

echo 'watchdog-device = /dev/watchdog' >> /etc/watchdog.conf

echo 'watchdog-timeout = 15' >> /etc/watchdog.conf

echo 'max-load-1 = 24' >> /etc/watchdog.conf

4) Enable the service

sudo systemctl enable watchdog

sudo systemctl start watchdog

sudo systemctl status watchdog

Now next time your Raspberry Pi freezes, the hardware watchdog will restart it automatically after 15 seconds.

If you want to test this, you can try running a fork bomb in your shell:

sudo bash -c ':(){ :|:& };:'

WARNING: Running this code will render your Raspberry Pi inaccessible until it is reset by the watchdog.
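Running the `echo ... >>` configuration commands from steps 1 and 3 a second time appends duplicate lines. A hedged alternative is a small idempotent helper (the name `append_once` is hypothetical) that adds a line only if the file does not already contain exactly that line:

```shell
#!/usr/bin/env bash
# Hypothetical helper: append LINE to FILE only if FILE does not already
# contain exactly that line, so re-running a setup script adds no duplicates.
append_once() {
  local line=$1 file=$2
  # -x: whole-line match, -F: fixed string (no regex), -q: quiet
  grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Usage (as root), e.g. for steps 1 and 3:
#   append_once 'dtparam=watchdog=on' /boot/config.txt
#   append_once 'watchdog-timeout = 15' /etc/watchdog.conf
```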


Hi Brad,

*very* useful, thanks for sharing!
